Overview

Alephant AI Gateway is an OpenAI-compatible control layer for production AI applications, available as hosted SaaS or as a self-hosted gateway. It gives developers one stable API surface while the gateway handles provider-specific adaptation, model routing, policy enforcement, layered caching, retries, fallback, usage metadata, request logging, and audit trails.

Instead of wiring every application directly to every provider, teams connect once and route across 50+ providers, 320+ models, and custom model backends. Start with Alephant Cloud for a managed workspace, or self-host the gateway when you need private infrastructure, BYO keys, and direct operational control.

Why this exists

AI applications are moving from single-model prototypes to production systems that call many providers, agents, tools, and custom model backends. Without a gateway, every team ends up rebuilding the same operational layer: provider adapters, routing rules, key management, usage metadata, retries, caching, and request logs.

Alephant AI Gateway centralizes that layer behind one OpenAI-compatible API. It gives developers a stable integration surface while platform teams get policy before provider access, cache before repeated calls, fallback before outages, and audit trails before production incidents.

The goal is simple: make AI traffic observable, governable, and reliable without slowing developers down.

Features

Developer surface

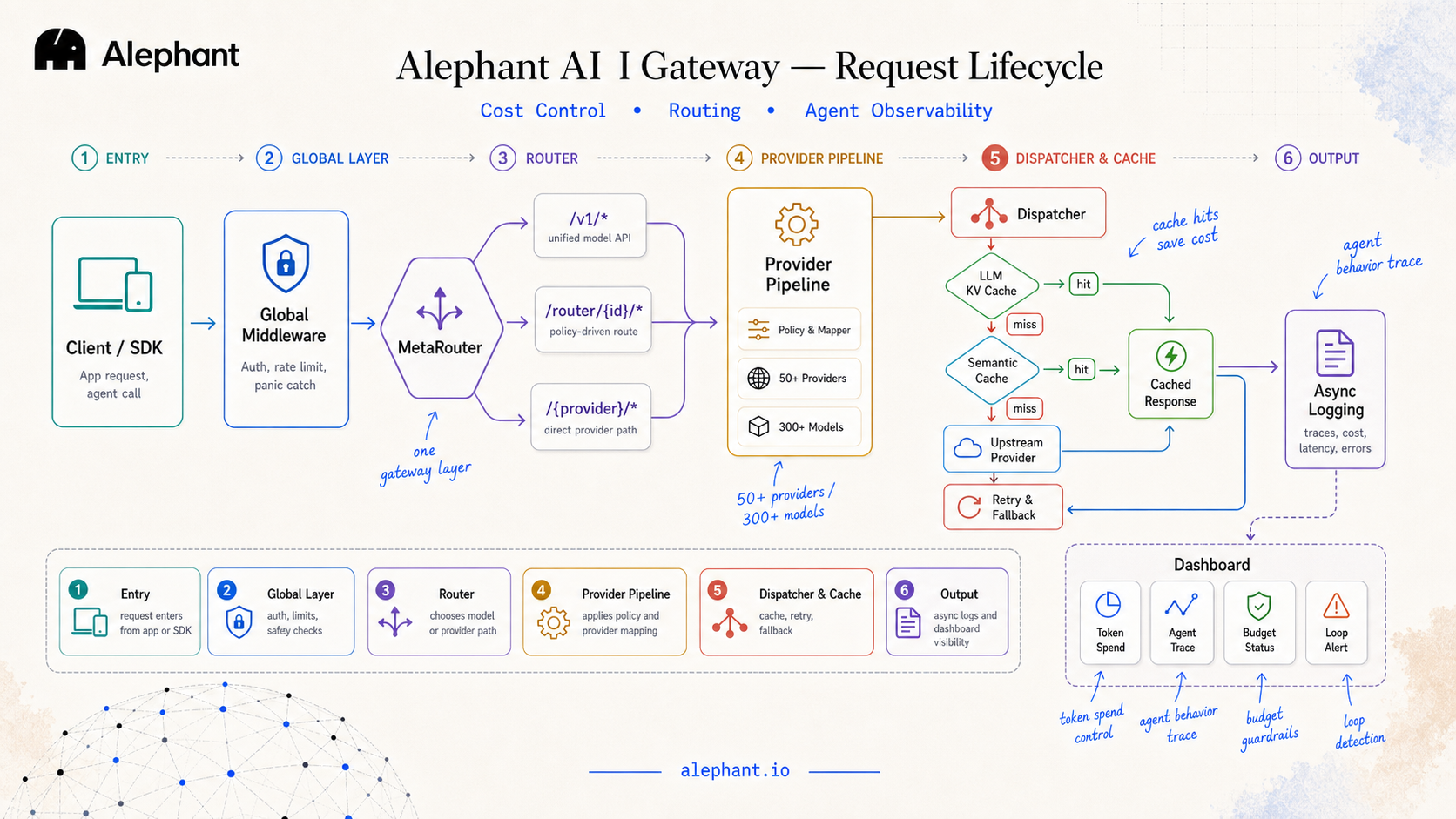

Architecture & request lifecycle

Every request passes through the same gateway lifecycle: global middleware, routing, provider mapping, dispatch, cache, fallback, and async logging. The entry path depends on how much control you want:

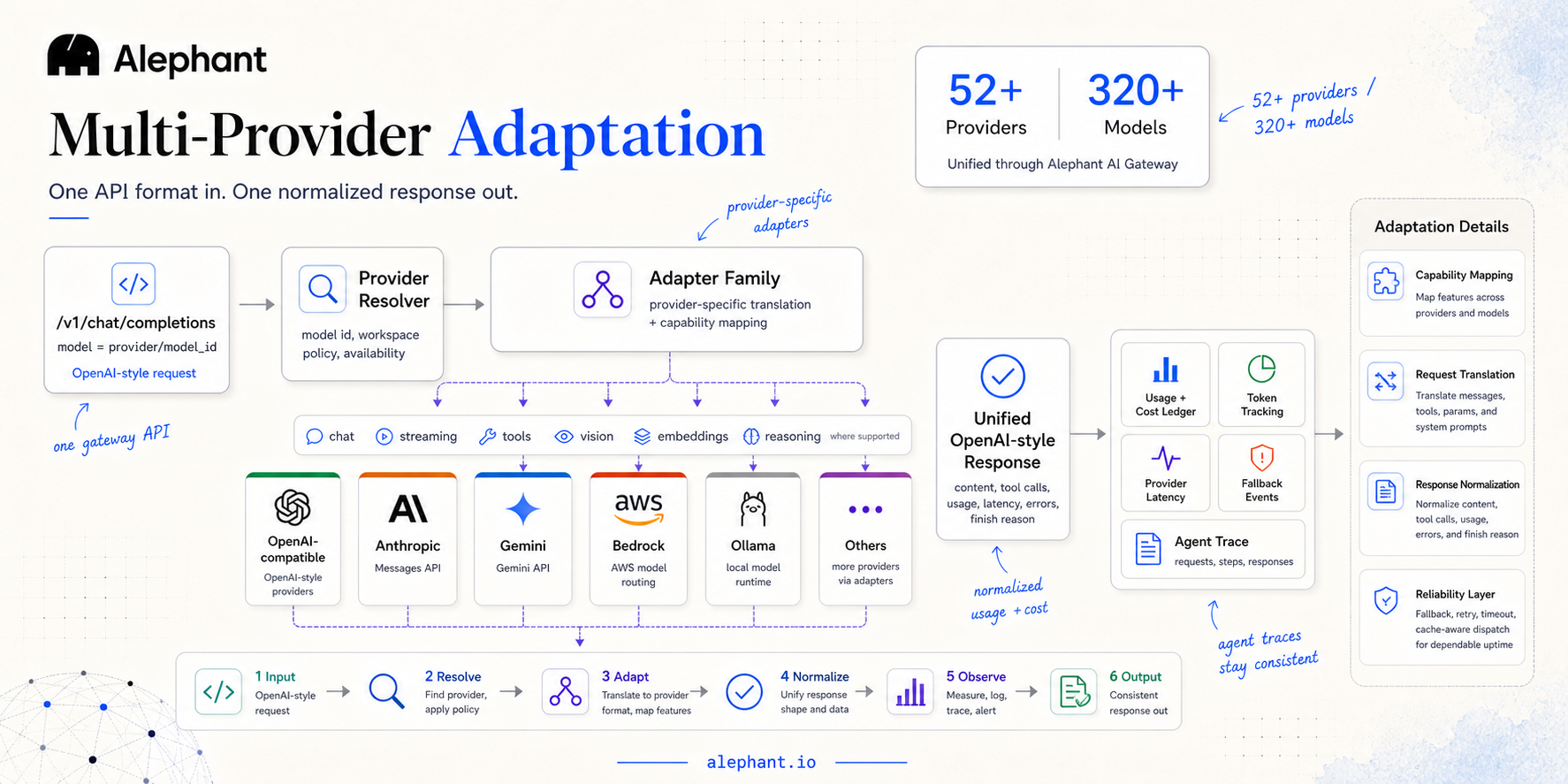
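As a rough illustration of the lifecycle above, the sketch below strings together a cache lookup, dispatch, fallback, and logging in a few lines of Python. It is not the gateway's implementation: `handle`, the provider callables, the in-memory cache, and the list-based log are all hypothetical stand-ins, the cache-before-dispatch ordering is an assumption, and logging here is synchronous where the gateway logs asynchronously.

```python
import json
from typing import Callable, Dict, List, Tuple

Request = Dict[str, object]
Response = Dict[str, object]
Provider = Callable[[Request], Response]


def handle(
    request: Request,
    providers: List[Tuple[str, Provider]],
    cache: Dict[str, Response],
    log: List[Tuple[str, str]],
) -> Response:
    """Serve a request from cache, else try providers in order (hypothetical)."""
    # Cache: identical requests map to the same key.
    key = json.dumps(request, sort_keys=True)
    if key in cache:
        log.append(("cache_hit", ""))
        return cache[key]

    # Dispatch with fallback: move to the next provider on any error.
    for name, call in providers:
        try:
            response = call(request)
        except Exception:
            log.append(("provider_error", name))
            continue
        cache[key] = response
        log.append(("served", name))
        return response

    raise RuntimeError("all providers failed")


def flaky(request: Request) -> Response:
    """Stand-in for a provider that is currently failing."""
    raise TimeoutError("upstream timeout")


def stable(request: Request) -> Response:
    """Stand-in for a healthy provider returning an OpenAI-style body."""
    return {"choices": [{"message": {"role": "assistant", "content": "ok"}}]}


if __name__ == "__main__":
    cache: Dict[str, Response] = {}
    log: List[Tuple[str, str]] = []
    req: Request = {"model": "primary/example-model", "messages": []}
    print(handle(req, [("primary", flaky), ("backup", stable)], cache, log))
    print(log)
```

In this sketch the first call records a provider error for `primary` and is served by `backup`; repeating the same request is then answered from the cache without touching any provider.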

Multi-provider adaptation

Use one OpenAI-style request shape across 50+ providers and 320+ models, including OpenAI-compatible APIs, Anthropic Messages, Gemini, Bedrock, Ollama, OpenRouter-style catalogs, and custom backends. The client selects a runtime with model=provider/model_id; Alephant resolves the provider, applies the right adapter, maps provider-specific fields, and returns a normalized OpenAI-style response.
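A minimal sketch of the client side of that contract, assuming the `provider/model_id` string is split on its first slash so that model IDs containing slashes stay intact. `parse_model`, `build_chat_request`, and the model names are hypothetical and only illustrate the request shape; how the gateway actually resolves providers is not shown here.

```python
from typing import Dict, List, NamedTuple


class ModelRef(NamedTuple):
    provider: str
    model_id: str


def parse_model(model: str) -> ModelRef:
    """Split 'provider/model_id' on the first slash only (assumed rule)."""
    provider, sep, model_id = model.partition("/")
    if not sep or not provider or not model_id:
        raise ValueError(f"expected 'provider/model_id', got {model!r}")
    return ModelRef(provider, model_id)


def build_chat_request(model: str, prompt: str) -> Dict[str, object]:
    """Build an OpenAI-style chat completion body for any provider."""
    parse_model(model)  # validate the model string before sending
    messages: List[Dict[str, str]] = [{"role": "user", "content": prompt}]
    return {"model": model, "messages": messages}


if __name__ == "__main__":
    # Placeholder model names; only the model string changes per provider.
    for model in ("anthropic/example-model", "ollama/example-local-model"):
        print(build_chat_request(model, "Hello"))
```

The request body is identical across providers apart from the `model` field, which is the point of the contract: switching providers should not require changing application code.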

Instead of listing every model in the README, this section focuses on the contract: one request format in, one consistent response out. The provider and model catalog can evolve independently without forcing application code changes.

IDE integration

Alephant AI Gateway ships repository-level tooling for AI-assisted development inside supported IDEs.

Comparison

Portkey and LiteLLM are excellent projects, but they start from different centers of gravity. Alephant is built for teams shipping agentic AI products: a hosted SaaS workspace plus a self-hosted gateway path for agent development, cost control, provider routing, governance, and operational visibility.

Alephant’s differentiator is the combination: hosted SaaS, self-hosted Rust gateway, agent-first developer compatibility, cost-control workflows, BYO-key governance, explicit provider adaptation, and workspace-level AI FinOps.